Introduction

Seeing through the night like a cat, seeing the invisible, having a compass in our eyes, knowing more about the people around us: all of this is part of the dream of augmented vision.

I remember that the first time I was fascinated by such an idea was in Akira Toriyama’s anime Dragon Ball. The Saiyan warriors had a device called “The Scouter”. This device really looked like today’s smart glasses. It helped the Saiyans analyse the power of their opponents before a fight and locate them in the universe. The design was pretty simple: a big earpiece to communicate with other Saiyans in the universe. But according to the images (YouTube: How works the scouter), there is no way to fix it on the face, no headband, so I suppose the ear zone works like a suction cup.

In 1989, Akira Toriyama imagined the Scouter in Dragon Ball

In 1991, the movie Terminator 2 already showed a nice idea of augmented vision by putting us behind the robot’s eyes.

Augmented vision of the Terminator in Terminator 2 in 1991

Today this dream of augmented vision is a reality, and a lot of companies are working on it with different approaches:

Some companies building smart glasses

Augmented vision will enhance many industries. We will find applications that save time in industrial tasks and maintenance. It will help surgeons in complex operations by putting information about their patients directly on the operating table. For the army, it will bring more information on the battlefield, piloting assistance, operation supervision, logistic support or fight simulations. And there are many other uses for journalists, police, sport…

Some people consider this new device a simple gadget for geeks. But as we have seen with previous technological waves (computers, the Walkman, smartphones…), such devices radically change people’s behavior. And we can easily imagine a world where most people wear such a device on their face. Even if this new technology is great, it raises many challenges and questions to focus on before imagining such a tool spread across the whole population.

Security / Privacy

First of all, there are a lot of ethical questions for daily personal use. Augmented vision will change the way we interact with people. Even if glasses can enhance the information we have around us, they are also a system for capturing information: we will be able to record everything, everywhere.

Today some places, such as bars, prohibit wearing Google Glass for the safety and privacy of their clients. Some people consider that we are already too often behind a mobile screen, that social interactions have become less interesting and authentic, and that technology is going too far. With Glass, we will be behind a screen all the time. It’s clearly a question of human and behavioural evolution, and I don’t think the question is “is it going to happen?” but “how can we design it to make it better?”. Throughout history, human beings have never decided to curb technology.

This sarcastic video, showing how Glass could change a simple date in a café, raises some interesting questions:

Hacking is a big trending topic today and it concerns every electronic project. Every day we hear about a new big company or government being hacked. Every computer seems to be hackable. Today it’s already not that difficult to spy on someone by hacking a remote webcam. Hacking glasses could be like hacking everyone’s eyes: hackers or potential Big Brothers would be able to watch what people see.

Extrapolating, there is also the problem of capturing information all the time in a perpetual recording. Do we want that? Do we need that? The world is probably moving towards a fully open life, full of transparency and without any privacy. This issue has fueled a really important debate since 2010, when Facebook’s founder said that privacy is no longer a social norm. Other link: Privacy is dead and it’s not a big deal.

Health

Some studies on the impact of Wi-Fi waves on the brain report worrying results: they suggest that these waves may negatively affect overall health and brain health, especially in children. Wearing a warm, connected machine on the head, so close to the brain, all the time might be dangerous.

A lot of people are already tired of wearing contact lenses, so what will the effects be with a screen in front of the eyes all day? Even if we don’t need the glasses to be active 100% of the time, we need to ensure that there is no risk and to determine limits on usage time.

Observations

I had the opportunity to try two different models: the Laster Seethru and the Google Glass. Even with prototypes, we can already sense some good and bad practices. Most of the feedback is about the Google Glass, which has been distributed to and tested by more people around the world. Here is a mixed list of my own observations and of observations I read from online testers.

| ADVANTAGES | WEAKNESSES |

|---|---|

| very useful for the GPS when you drive | battery is quickly empty |

| taking picture or record video while you move is very easy | Impression to have a screen inside a screen. I would like to try a version with a bigger prism that takes all the visual field |

| Bone Conduction Transducer (BCT) sound system is interesting | repeating "Ok Glass" very often is weird |

| touching the side of the glass to scroll is not natural | |

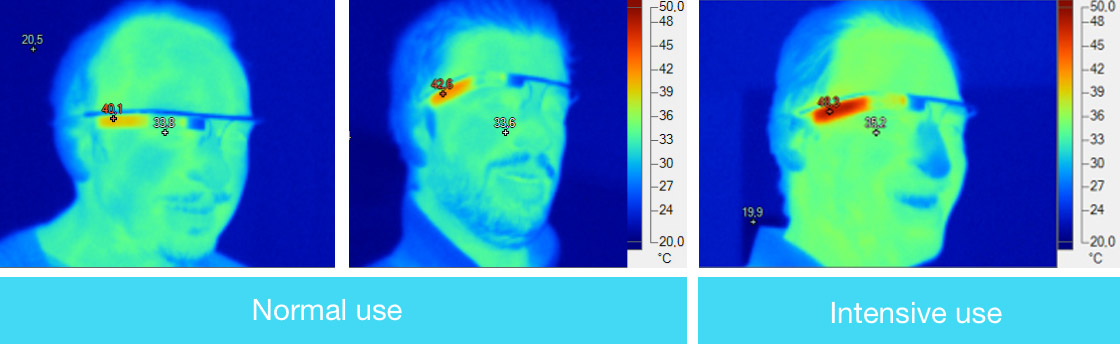

| battery temperature (i didn't observe it personaly when I used it but I've read interesting feedback about it. Intensive use means calls or GPS using) | |

| hide a part of the face. Eyes are the beautifulest part of a face and with this fixation they are hidden. |

Impression to see a screen inside a screen

Google Glass temperature Test by LesNumeriques.com

When I tried both models, they didn’t fit on my head very well. I would probably prefer a headband that stays firmly in place when I move my face. And I think we could find a way to avoid the part on the other side of the head, which has no purpose other than holding the object.

Here is a bit of research I did on an ergonomics idea:

The first version of Google Glass was based on a small layer in a corner of the eye that gives more direct access to information from the phone. But augmented reality vision goes further than that. In addition to freeing the hands, the Glass of the future must give information about the immediate environment and become a new medium for many practices.

I – INTERACT WITH GLASS

The objective of Glass is to free the user’s hands, facilitate access to the information around him and keep him connected with his digital life. Today, interaction with Glass relies essentially on voice recognition and on touching the right side of the frame. Some small movements such as a wink or a head shake are available for special features. These interactions are restrictive and don’t cover all actions.

The different ways to interact with Google Glass

Here, I describe the advantages and weaknesses of the different types of interactions.

a) Voice Recognition

| ADVANTAGES | WEAKNESSES |
|---|---|
| direct access | not discreet |
| avoids using the hands | direct conversation vs. Glass commands (the repetition is not natural) |
| | each command line needs to be displayed if you don't know them by heart |
| | another person can command your Glass with their voice |

Voice Recognition can’t be the only way of interaction.


b) Hand Gestures

The gestures of the current Glass are efficient for the current model, but they require real training to use. It’s not as obvious as using a smartphone. Project Soli could be an amazing tool and would allow new kinds of interactions.

| ADVANTAGES | WEAKNESSES |
|---|---|
| can be natural for some features | hands are not free… |
| | can look very weird in public areas |
| | effort on the muscles & natural body postures |

Project Soli at Google can really help: its sensor can be scaled down and built into small devices and everyday objects

In Minority Report, Tom Cruise needs real know-how to use his screen

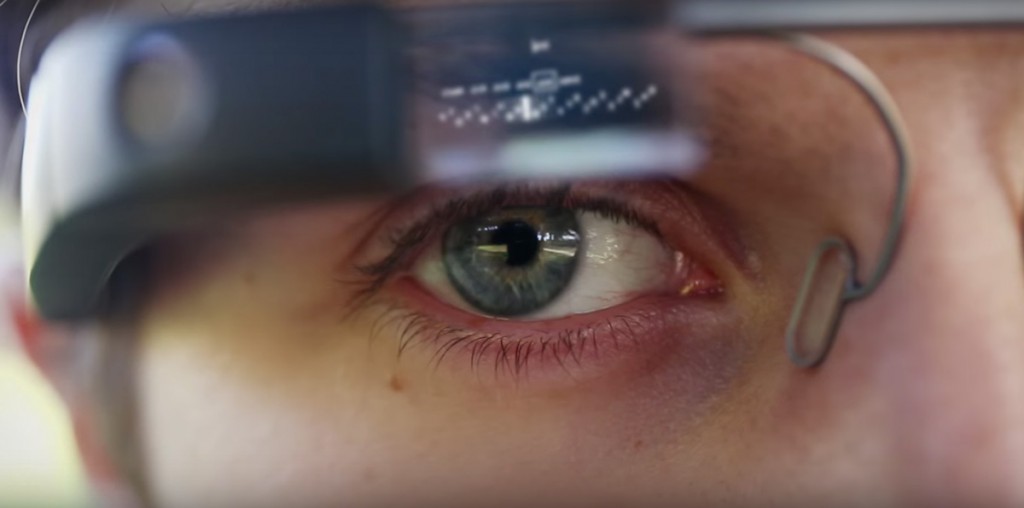

d) Eyes focus

The eyes could work like a computer mouse: target with the eyes and use an eyebrow movement to click.
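To make the idea more concrete, here is a minimal sketch of such a "gaze pointer". It assumes a hypothetical eye tracker that delivers gaze coordinates and an eyebrow state for each sample; `Target`, `GazeSample` and `clicks` are illustrative names I made up, not part of any real Glass API. A click is only registered at the moment the eyebrow goes up, so holding it raised does not click repeatedly.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional


@dataclass(frozen=True)
class Target:
    """A rectangular on-screen element (hypothetical layout, in pixels)."""
    name: str
    x: float
    y: float
    width: float
    height: float

    def contains(self, gx: float, gy: float) -> bool:
        return (self.x <= gx <= self.x + self.width
                and self.y <= gy <= self.y + self.height)


@dataclass(frozen=True)
class GazeSample:
    """One reading from an assumed eye tracker."""
    x: float
    y: float
    eyebrow_raised: bool


def target_under_gaze(targets: Iterable[Target],
                      sample: GazeSample) -> Optional[Target]:
    """Return the first target the user is looking at, if any."""
    for target in targets:
        if target.contains(sample.x, sample.y):
            return target
    return None


def clicks(targets: List[Target], samples: Iterable[GazeSample]) -> List[str]:
    """Names of the targets clicked, one per eyebrow raise (rising edge)."""
    clicked = []
    was_raised = False
    for sample in samples:
        if sample.eyebrow_raised and not was_raised:
            target = target_under_gaze(targets, sample)
            if target is not None:
                clicked.append(target.name)
        was_raised = sample.eyebrow_raised
    return clicked
```

With a "menu" target and a "map" target, looking at each one and raising the eyebrow once would yield one click per target; raising the eyebrow while looking at empty space yields nothing.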

| ADVANTAGES | WEAKNESSES |
|---|---|
| evident & direct | can strain the eyes |
| very good precision | |

Even if the technology is not effective yet, this is the direction we must strive for when it comes to default interactions. The other senses can come in as specific support or for complementary access.

The challenge of creating an interaction based only on eye focus raises some questions:

– How to navigate easily in the new system with only the eyes?

– How to use some commands with the eyes? (ex: keyboard…)

– Is it a natural gesture?

– Does it create ocular problems in the short / medium / long term?

II – Architecture

Even if this analysis needs lots of testing, I try here to reflect on a general architecture that could be part of basic guidelines for the development of future applications. In this example, I assume that the user has a glass in front of each eye and that these glasses have the size of traditional glasses.

For this work, I chose a context of daily use: a sort of evolution of the first version of Google Glass. This context is the most complex because it involves managing very diverse parameters:

– indoor / outdoor

– mobile / passive

– several applications used at the same time

– obstacles

But I think these short guidelines are generic and open enough to be used in different contexts (professional and hobby use).

General layers architecture

1) Mobility & Walking Visual Zone

If the user needs to use his Glass at any time, he needs safe visibility while he walks. His path must be free of visual barriers or noise. For safety reasons, it is important that this zone stays off-limits to blocking information.

This zone can display blocking information such as long text (emails, news…) only if the user is passive, that is, if he doesn’t need to see in front of him. These cases occur when he is seated.

2) Augmented Reality & Passive Mode Layer

This zone covers the whole visual field of the glass. It is the main layer. It can show two types of information depending on the mode of the Glass:

– in Walking mode: only augmented information directly connected with the nearby reality

– in Passive mode: it can display blocking information, the type of information that greatly decreases the safety of the user

The Glasses should be able to automatically detect if the user is in Walking Mode or Passive Mode.
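As an illustration, here is a minimal sketch of how this automatic detection could work. It assumes the glasses expose the user's speed in metres per second from some motion sensor; the two thresholds and the names `Mode` and `detect_modes` are my own assumptions, not measured values or an existing API. Two different thresholds (hysteresis) are used so that the mode doesn't flicker when the user slows down for a moment.

```python
from enum import Enum
from typing import Iterable, List


class Mode(Enum):
    WALKING = "walking"
    PASSIVE = "passive"


# Assumed thresholds in metres per second; real values would need user testing.
WALK_THRESHOLD = 0.5   # at or above this speed, a passive user becomes a walker
REST_THRESHOLD = 0.2   # at or below this speed, a walker becomes passive


def detect_modes(speeds: Iterable[float],
                 start: Mode = Mode.PASSIVE) -> List[Mode]:
    """Return the mode after each speed sample, using hysteresis."""
    mode = start
    modes = []
    for speed in speeds:
        if mode is Mode.PASSIVE and speed >= WALK_THRESHOLD:
            mode = Mode.WALKING
        elif mode is Mode.WALKING and speed <= REST_THRESHOLD:
            mode = Mode.PASSIVE
        modes.append(mode)
    return modes
```

For example, with the speeds `[0.0, 0.3, 0.6, 0.3, 0.1]`, the user stays passive for the first two samples, switches to walking at 0.6, stays in walking mode at 0.3 (a short slowdown) and only goes back to passive at 0.1.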

Examples of apps we could use on this layer :

– geo-markers

– augmented artistic exhibitions

– games

– reading long information (emails, news)

– karaoke

– auto-translation

– record a video

– take a picture

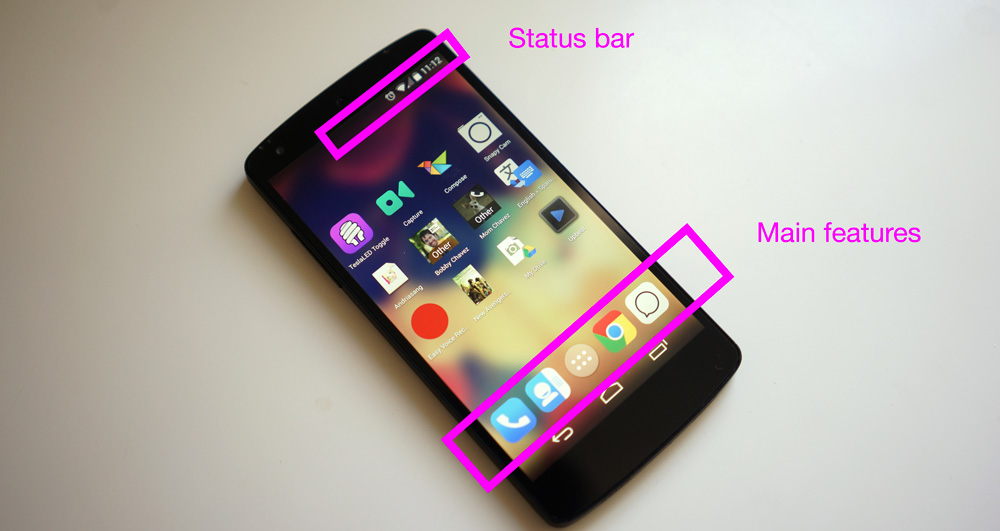

3) Permanent information layer

This is the information we need to access very often, such as date, time, battery life, network signal and menu.

It’s a kind of mix of the main info from the status bar and the main apps of today’s smartphones.


4) Non-blocking information

This information is displayed all around the walking visual field (sides and top). It is the kind of support information you want or need to check often and that doesn’t block your walk. These types of applications could be displayed in these blocks:

– short notifications

– audio or video conference calls

– notifications (agenda, applications…)

– map & GPS Navigation

– quick search results

– music management (in shuffle mode)
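To sum up the four layers, here is a minimal sketch of how an application could ask where it is allowed to draw. This is only my reading of the guidelines above, written under assumptions: the names `Kind`, `Zone` and `zone_for` are hypothetical, and I assume that augmented information stays available in both modes.

```python
from enum import Enum
from typing import Optional


class Mode(Enum):
    WALKING = "walking"
    PASSIVE = "passive"


class Kind(Enum):
    AUGMENTED = "augmented"        # geo-markers, auto-translation...
    BLOCKING = "blocking"          # long texts such as emails or news
    PERMANENT = "permanent"        # date, time, battery, network, menu
    NON_BLOCKING = "non_blocking"  # short notifications, GPS, music...


class Zone(Enum):
    FULL_FIELD = "full_field"        # main layer, covers the whole glass
    PERMANENT_BAR = "permanent_bar"  # always-visible status information
    PERIPHERY = "periphery"          # sides and top of the walking zone


def zone_for(kind: Kind, mode: Mode) -> Optional[Zone]:
    """Return the zone where this kind of information may be displayed.

    None means the information must wait until the user is passive.
    """
    if kind is Kind.PERMANENT:
        return Zone.PERMANENT_BAR
    if kind is Kind.NON_BLOCKING:
        return Zone.PERIPHERY
    if kind is Kind.AUGMENTED:
        return Zone.FULL_FIELD
    # Blocking information would hide the path, so it is only shown
    # when the user does not need to see in front of him.
    return Zone.FULL_FIELD if mode is Mode.PASSIVE else None
```

With this rule, an email opened while walking is simply held back (`zone_for(Kind.BLOCKING, Mode.WALKING)` returns `None`), while a GPS instruction is always routed to the periphery.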